dispersion.spat: Spatial Sound and Virtual Acoustics for blended co-present/telematic performance

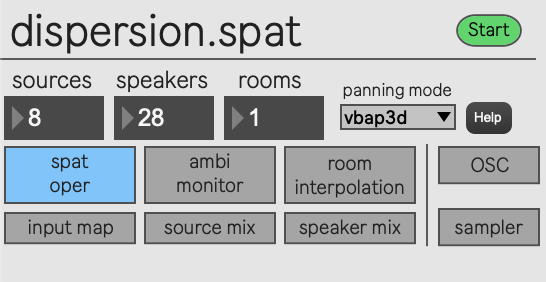

dispersion.spat is an audio spatialization and dynamic virtual acoustics system. It gives geometric control over sound source positions and, in a telematic context, over shared sonic spaces. Building on IRCAM’s Spat 5, the system interpolates between saved virtual acoustic spaces. Each space is defined by the perceptual qualities of reverberance, heaviness, liveness, warmth, brilliance, presence, and envelopment, along with early and cluster reverberation values. Every one of these values can be manipulated during a real-time performance, so room qualities can change flexibly and saved rooms can be blended into one another. Our approach is unique in integrating several aspects in one system: controllable spatialization trajectories, interpolation of virtual rooms, a methodology for measuring physical spaces to create new virtual spaces, and the potential for telematic connections. The system is used regularly in both local and telematic performance, for example with the Doug Van Nort Electro-Acoustic Orchestra.

Virtual Acoustics Control

For an outline of how virtual acoustic spaces are established with dispersion.smk, and of how dispersion.spat is used in a telematic context, see Virtual Acoustics for Telematic/Co-located Space.

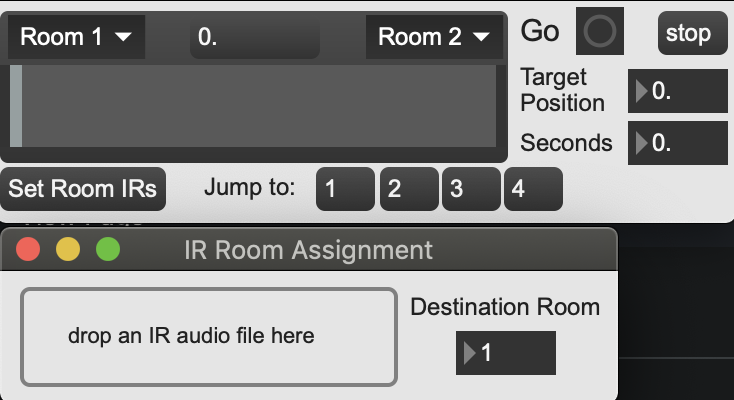

Users can move between saved virtual acoustic spaces using the room interpolation subpatch, which supports both smooth transitions and quick jumps to a desired room. At the top left of the subpatch is a slider for manually moving between two chosen spaces, which are selected in the fields to the left and right above the slider. Dragging the slider by hand gives expressive, dynamic control over the values. It can also serve as a performative gesture, for example to “dip” briefly into another room or to coax the values toward a desired room in a non-linear fashion. The floating-point number between the two room selectors shows the interpolation position toward the right-hand room. A value of 0. applies the left room’s values in full, 0.5 denotes a 50% blend, and 1. applies the right-hand room’s parameters in full.
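To make the slider’s 0. to 1. scale concrete, the following is a minimal sketch of blending two saved rooms. It assumes that each room is stored as a set of named perceptual values and that interpolation is a per-parameter linear blend. The source does not state how Spat 5 interpolates internally, and the room names and values here are hypothetical placeholders.

```python
# Minimal sketch: per-parameter linear blend between two saved rooms.
# ASSUMPTION: rooms are plain name -> value maps and the blend is linear;
# the room names and numbers below are hypothetical, not Spat 5 presets.

def interpolate_rooms(left: dict[str, float], right: dict[str, float], t: float) -> dict[str, float]:
    """Blend two rooms: t = 0. gives the left room, t = 1. gives the right room."""
    if not 0.0 <= t <= 1.0:
        raise ValueError("interpolation position must be between 0. and 1.")
    # Only parameters present in both rooms are blended; left-room order is kept.
    return {name: (1.0 - t) * left[name] + t * right[name]
            for name in left if name in right}


small_room = {"reverberance": 20.0, "heaviness": 30.0, "liveness": 25.0}
large_hall = {"reverberance": 60.0, "heaviness": 50.0, "liveness": 45.0}

# Slider at 0.5: halfway between the two rooms.
print(interpolate_rooms(small_room, large_hall, 0.5))
```

A linear blend keeps every intermediate slider position between the two rooms’ values. A performer’s non-linear gestures then come from how the slider is moved, not from the blend itself.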

Users can also set a timed ramp between the chosen rooms. This is done on the right side of the subpatch using the target position, seconds, and Go button. The linear ramp starts at the slider’s current position and moves toward the target position (again from 0. to 1.) over the specified number of seconds.
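The timed ramp can be pictured as a list of slider positions sampled along a straight line. The sketch below assumes updates at a fixed control rate. The `update_hz` parameter is hypothetical, and the patch may schedule its updates differently.

```python
# Minimal sketch of a linear timed ramp for the interpolation slider.
# ASSUMPTION: positions are emitted at a fixed, hypothetical control rate.

def ramp_positions(current: float, target: float, seconds: float,
                   update_hz: float = 20.0) -> list[float]:
    """Slider positions moving linearly from `current` to `target` over `seconds`."""
    for value in (current, target):
        if not 0.0 <= value <= 1.0:
            raise ValueError("slider positions must be between 0. and 1.")
    # A zero-length ramp collapses to a single jump to the target.
    steps = max(1, round(seconds * update_hz))
    positions = []
    for i in range(steps + 1):
        f = i / steps  # fraction of the ramp completed
        positions.append((1.0 - f) * current + f * target)
    return positions


# A 2-second ramp from the slider's current position (0.2) to 0.8.
for pos in ramp_positions(0.2, 0.8, 2.0, update_hz=4):
    print(round(pos, 3))
```

Each position returned by this sketch could be fed to a room blend like the one described above, so the ramp only has to move a single number.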

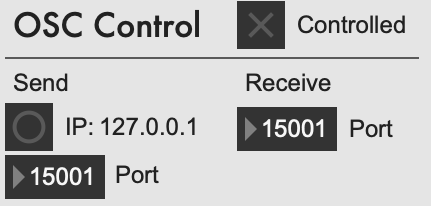

Room parameters are controlled through the Spat Oper packaged with Spat 5, which we have extended to accept remote control via OSC. A dispersion.spat patch is controlled remotely from another instance of the patch running on a different computer. This is configured in the OSC subpatch. If the current instance should receive remote control, tick the “controlled” checkbox. If the current instance should act as the controller, set the target IP address and port in the same subpatch.
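The OSC link between a controller and a controlled instance can be illustrated with a hand-built OSC 1.0 message sent over UDP, using only the Python standard library. This is an illustrative sketch, not the patch’s own networking. The address `/room/interp` is hypothetical, because the source does not list the OSC namespace dispersion.spat uses.

```python
import socket
import struct

# Minimal sketch of sending an OSC 1.0 message over UDP.
# ASSUMPTION: the address "/room/interp" is a hypothetical example, not the
# actual dispersion.spat namespace; all arguments are sent as 32-bit floats.

def _osc_string(s: str) -> bytes:
    """Encode an OSC string: ASCII, null-terminated, padded to a multiple of 4 bytes."""
    data = s.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def osc_message(address: str, *values: float) -> bytes:
    """Build an OSC message whose arguments are big-endian float32 values."""
    type_tags = "," + "f" * len(values)
    payload = b"".join(struct.pack(">f", v) for v in values)
    return _osc_string(address) + _osc_string(type_tags) + payload


def send_osc(ip: str, port: int, address: str, *values: float) -> None:
    """Send one OSC message to the target IP and port set on the controller."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(osc_message(address, *values), (ip, port))


# Build (but do not send) a message moving the interpolation slider to 0.5.
msg = osc_message("/room/interp", 0.5)
print(len(msg), msg)
```

On the controller, a call such as `send_osc(target_ip, target_port, "/room/interp", 0.5)` would deliver this message to the controlled instance. Here `target_ip` and `target_port` stand for the values entered in the OSC subpatch.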

Spatial Trajectories

Further control of source positions comes from integrating existing geometric spatialization techniques from the GREIS and FILTER systems with externals from the ICST Ambisonics Max package. The patch contains five separate control modules, and each module can assign any of the Spat sources to a given geometric or trajectory method.
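The following sketch illustrates what it means to assign sources to a module that drives them along a trajectory. It uses a simple circular orbit as a stand-in method. The `CircleModule` class is hypothetical. It does not reproduce the GREIS, FILTER, or ICST algorithms, and it assumes 2-D Cartesian positions.

```python
import math
from dataclasses import dataclass

# Minimal sketch of one trajectory module with assigned sources.
# ASSUMPTION: a circular orbit stands in for the actual geometric methods;
# CircleModule and its parameters are hypothetical.

@dataclass
class CircleModule:
    """Hypothetical module: every assigned source orbits a centre point."""
    radius: float                          # same units as source positions
    period_s: float                        # seconds per full revolution
    centre: tuple[float, float] = (0.0, 0.0)

    def positions(self, sources: list[int], t: float) -> dict[int, tuple[float, float]]:
        """(x, y) for each assigned source at time t, spaced evenly on the circle."""
        base = 2.0 * math.pi * t / self.period_s
        out = {}
        for k, src in enumerate(sources):
            angle = base + 2.0 * math.pi * k / len(sources)
            out[src] = (self.centre[0] + self.radius * math.cos(angle),
                        self.centre[1] + self.radius * math.sin(angle))
        return out


# Five independent modules, each of which may drive any subset of sources.
modules = [CircleModule(radius=2.0, period_s=8.0) for _ in range(5)]
print(modules[0].positions([1, 2, 3, 4], t=1.0))
```

Keeping each module’s source list separate mirrors the description of five modules that can each take any of the Spat sources.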

We also developed two options that are unique to our approach: “anchoring” one source to another, and bounding boxes that constrain movement. An anchored source follows the position of a target source, so a “lead source” and any number of followers can be assigned quickly. Bounding is shown in the example below, applied to source 3.
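Anchoring and bounding can be combined in a few lines. The sketch below assumes 2-D positions and an axis-aligned box. Both `BoundingBox` and `anchored_position` are hypothetical names, and the sketch does not show how the patch itself stores these settings.

```python
from dataclasses import dataclass
from typing import Optional

# Minimal sketch of anchoring a follower to a leader, with an optional bound.
# ASSUMPTION: positions are 2-D and boxes are axis-aligned; names are hypothetical.

@dataclass
class BoundingBox:
    """Axis-aligned box that constrains where a source may move."""
    x_min: float
    x_max: float
    y_min: float
    y_max: float

    def clamp(self, x: float, y: float) -> tuple[float, float]:
        """Pull a position back inside the box if it has strayed outside."""
        return (min(max(x, self.x_min), self.x_max),
                min(max(y, self.y_min), self.y_max))


def anchored_position(leader: tuple[float, float],
                      offset: tuple[float, float] = (0.0, 0.0),
                      box: Optional[BoundingBox] = None) -> tuple[float, float]:
    """Follower position: the leader's position plus an offset, optionally bounded."""
    x, y = leader[0] + offset[0], leader[1] + offset[1]
    return box.clamp(x, y) if box is not None else (x, y)


box = BoundingBox(-2.0, 2.0, -2.0, 2.0)
# The leader is at (3, 1); the follower is offset by (1, 0.5) and clamped to the box.
print(anchored_position((3.0, 1.0), offset=(1.0, 0.5), box=box))
```

Because the follower is computed only from the leader’s position, any number of sources can share the same leader without interfering with one another.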

A source can also be anchored to another source that is already following a leader, which allows cascading chains of sources. The animation shows this with sources 6, 7, and 8, where sources 7 and 8 are anchored to source 6 with an offset of 300 ms. Position offsets can be applied as well, which extends the potential for complex spatial relationships.
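One way to read the 300 ms offset is as a time delay: the follower retraces the leader’s path 300 ms later. Under that interpretation, which is an assumption, the sketch below keeps a short history of the leader’s positions. Chaining followers produces a cascade. `DelayedFollower` is a hypothetical class and not the patch’s implementation.

```python
from collections import deque

# Minimal sketch of time-delayed following, as one reading of the 300 ms offset.
# ASSUMPTION: the offset is a time delay on the leader's path; the class is hypothetical.

class DelayedFollower:
    """Reproduces its leader's path `delay_s` seconds late, plus a position offset."""

    def __init__(self, delay_s: float, offset: tuple[float, float] = (0.0, 0.0)):
        self.delay_s = delay_s
        self.offset = offset
        self.history: deque = deque()  # (time, (x, y)) samples of the leader

    def update(self, t: float, leader_pos: tuple[float, float]) -> tuple[float, float]:
        """Record the leader at time t and return this follower's position."""
        self.history.append((t, leader_pos))
        # Keep the newest sample at or before t - delay as the head of the queue.
        while len(self.history) > 1 and self.history[1][0] <= t - self.delay_s:
            self.history.popleft()
        _, (x, y) = self.history[0]
        return (x + self.offset[0], y + self.offset[1])


# Cascade: a follower of source 6, and a further follower of that follower.
follower_a = DelayedFollower(0.3)
follower_b = DelayedFollower(0.3, offset=(0.0, 0.5))
for step in range(11):
    t = step * 0.1
    source_6 = (t, 0.0)                  # source 6 glides along the x axis
    pos_a = follower_a.update(t, source_6)
    pos_b = follower_b.update(t, pos_a)  # anchored to a source that is itself following
print(pos_a, pos_b)
```

With a pure time delay, every link in a chain adds its own lag. Under this reading, followers farther down a cascade trail further behind the lead source.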

Publication

Hoy, R., and D. Van Nort, “A Technological and Methodological Ecosystem for Dynamic Virtual Acoustics in Telematic Performance Contexts”, in Proc. of Audio Mostly, 2021.